Continuous, Continuous Integration

Last week, I finished implementing our refactor of Hemlock’s file I/O from synchronous system calls to asynchronous io_uring submissions and completions. Firstly, I want to say that io_uring rocks. Building an asynchronous I/O framework on top of it was blissful compared to similar past project experiences with poll and epoll.

Also last week, I found out that Hemlock’s new file I/O implementation breaks our continuous integration build in GitHub Actions. The reason? The file I/O changes targeted bleeding edge kernel features, and GitHub Actions’ ubuntu-latest was version 20.04 (nearly two years old now). That created a problem for us. We were using io_uring kernel features that weren’t available until kernel version 5.13. GitHub Actions recently made Ubuntu 20.04.3 available, which gave us kernel 5.11. It was a little short of meeting our needs.
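To make the mismatch concrete, here is a minimal sketch of a version guard that a build script could run before attempting any io_uring tests. The function name `kernel_at_least` is an assumption made for illustration; it compares only the major and minor components reported by `uname -r`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if the kernel version is at least the
# required MAJOR.MINOR. The second argument defaults to `uname -r`.
kernel_at_least() {
  local required="$1" current="${2:-$(uname -r)}"
  local req_major req_minor cur_major cur_minor
  # Split "5.13" and e.g. "5.11.0-27-generic" on dots.
  IFS=. read -r req_major req_minor _ <<<"${required}"
  IFS=. read -r cur_major cur_minor _ <<<"${current}"
  # Drop any non-numeric suffix from the minor component (e.g. "13-rc1").
  cur_minor="${cur_minor%%[!0-9]*}"
  (( cur_major > req_major ||
     (cur_major == req_major && cur_minor >= req_minor) ))
}

if kernel_at_least 5.13; then
  echo "kernel is new enough for the io_uring features we need"
else
  echo "kernel $(uname -r) is older than 5.13"
fi
```

On a 5.11 kernel such as the one in Ubuntu 20.04.3, the guard takes the second branch.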

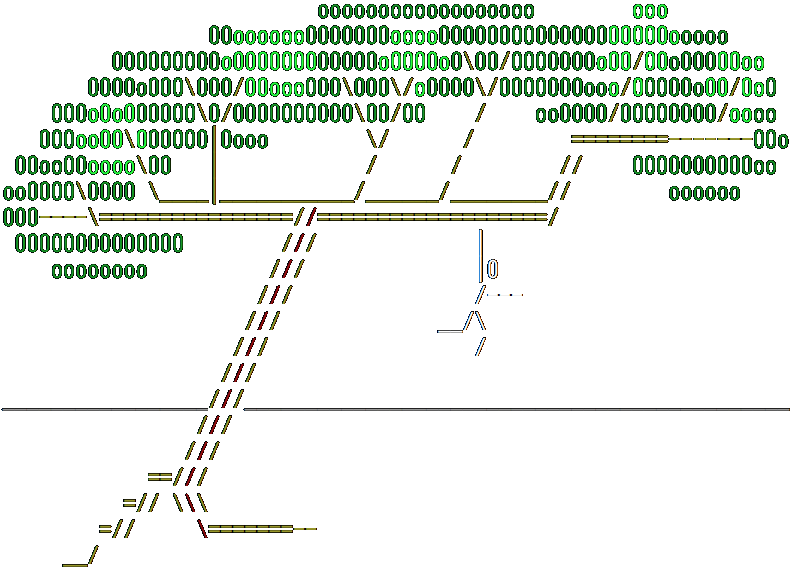

I briefly thought of turning off our C/I and merging the file I/O changes with roughly the following commit message.

Refactor File I/O on top of io_uring.

- Disable C/I because it can't handle io_uring.

- It works on my machine. ¯\_(ツ)_/¯

However, as it was not amateur-hour at BranchTaken, I did not do that. Continuous integration remained continuous and file I/O changes sat patiently in a branch while we modified our C/I. GitHub Actions obviously could not do the job alone, so we had to build and test our pull requests elsewhere. Deciding where was a bit daunting.

We considered the cloud giants: AWS (some mix of EC2, ECS, ECR, CodeBuild, or Lambda), Azure, and GCP. Additional solutions we considered were TravisCI and self-hosted GitHub Actions runners on a cloud provider. Of all these, I found that TravisCI was the only one that would not support the latest release of Ubuntu. Given my modest experience with AWS and our eventual need for ARM processing, AWS seemed the obvious choice amongst cloud providers. That experience led me to estimate a minimum of one week (and to brace for as much as one month) of effort to get everything set up properly, and to expect that I’d probably do it in a way that would need frequent refactors and maintenance. I found the idea distasteful, but it initially seemed the best option.

Fortunately, the GitHub Universe conference was still in our short-term memory. One of the big takeaways we had was that the gh CLI is amazingly useful. Just before committing to integrating AWS into our C/I, we realized we might be able to use the newly-announced gh extension feature to achieve our goal. Extensions can be implemented as simple bash scripts. That simplicity made the approach extremely attractive compared to the overwhelming complexity of spinning up new cloud infrastructure. We could create our own gh push command to supersede our usage of git push. It would build and run our C/I tests, git push our commit, and mark the commit status as success or failure on our GitHub repository. Additionally, Docker is so reliable and repeatable across different environments that we were okay with just having the extension build and run our tests in a local docker build command rather than in the cloud. We had the Docker infrastructure mostly built already since we use it for all of our development. Our old GitHub Action would then be reduced to verifying that commits have a success status rather than being wholly responsible for building and testing. Accidentally running git push instead of gh push would still be fine for us. Commits pushed this way simply wouldn’t have any associated status and would not be mergeable from a pull request until we used gh push to run C/I tests and write results to GitHub.
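The flow described above can be sketched as a short bash script. This is a minimal illustration, not the actual extension: the image tag `hemlock-ci`, the status context `gh-push`, the description text, and all function names are assumptions. It relies on `gh api` to call GitHub's commit status endpoint, and on `gh api` filling in the `{owner}` and `{repo}` placeholders from the current repository.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of a `gh push` extension (an executable named gh-push).
set -euo pipefail

# Map a test exit code to a GitHub commit status state.
status_for() {
  if [ "$1" -eq 0 ]; then echo success; else echo failure; fi
}

# Build the project and run its tests inside a local Docker build.
# Assumes the Dockerfile in the current directory runs the test suite.
run_ci() {
  docker build --tag hemlock-ci .
}

# Write the result to GitHub as a commit status on the given SHA.
report_status() {
  local sha="$1" state="$2"
  gh api --method POST "repos/{owner}/{repo}/statuses/${sha}" \
    -f state="${state}" \
    -f context="gh-push" \
    -f description="Local Docker C/I"
}

main() {
  local sha rc=0
  sha="$(git rev-parse HEAD)"
  run_ci || rc=$?                  # remember the result, keep going
  git push "$@"                    # push the commit either way
  report_status "${sha}" "$(status_for "${rc}")"
  return "${rc}"
}

# gh looks for an executable named gh-<name>; run only under that name.
if [[ "$(basename "$0")" == "gh-push" ]]; then
  main "$@"
fi
```

Saved as an executable file named `gh-push` in a directory of the same name, a script like this could be installed with `gh extension install .` and then invoked as `gh push`. Any arguments are passed through to `git push`. The commit is pushed even when the tests fail, so the failure status has a commit on GitHub to attach to.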

We decided to go the route of the gh push extension, and are quite happy we did. Our C/I is actually simpler than when we started and we think our testing strategy will take us a long way into the future. Unabashedly, that testing strategy is, “It works on my machine. ¯\_(ツ)_/¯”.